I began the week, and the second semester, on Monday morning by heading to the studio (though I had to wait until it was unlocked, so I couldn’t have as early a start as usual). I started my work by copying the necessary scripts from the first semester’s Unity prototype to a new project, so that I could keep the work separate and a bit more tidy. I then removed sections of the scripts’ code that were commented-out or erroneous (due to the removal of unnecessary scripts), as well as any references to abandoned camera systems (as we’d settled on an isometric one, and the system for it was already in-place). All of this was a bit tedious, but I’d say it was necessary.

In the afternoon, I started implementing an idea I’d come up with after handing-in my work last semester. At the time, the camera system allowed me to independently enter minimum and maximum rates of change for the horizontal angle, the vertical angle, the orbit radius and the vertical offset of the orbit’s origin. However, having to independently enter them meant that getting them to synchronise (ending the transitions simultaneously) would be a case of trial and error. Therefore, I thought that I could instead allow myself to determine the two numbers that every total value difference would be divided by to determine the minimum and maximum rates of change, so that the transitions would all automatically line-up. Implementing this meant making total distance calculations for each changing value (which I’d already determined) only occur once, so that they could be stored as variables and used not only for determining the current increase rate (as they were at the time), but also to determine the time-based rates of increase. I then had to add the actual calculations to determine the minimum and maximum increase rates, and then change the script managing the camera’s values to take the minimum and maximum increase times, as opposed to all of the individual rates.
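To make the idea concrete, here is a minimal sketch in Python (not the actual Unity script) of deriving every value's rates from two shared durations. The names `Channel`, `synced_rates`, `slow_time` and `fast_time` are made up for illustration, and it assumes the two divisors are durations in seconds, with the longer one producing the minimum rate.

```python
from dataclasses import dataclass


@dataclass
class Channel:
    """One camera value that changes during a transition (hypothetical type)."""
    name: str
    start: float
    target: float


def synced_rates(channels, slow_time, fast_time):
    """Return {name: (min_rate, max_rate)} for every channel.

    Each channel's total distance is calculated once and divided by the
    same two durations, so all channels moving at their minimum rate finish
    after `slow_time` seconds, and all moving at their maximum rate finish
    after `fast_time` seconds.
    """
    if slow_time <= 0 or fast_time <= 0:
        raise ValueError("durations must be positive")
    rates = {}
    for channel in channels:
        total = abs(channel.target - channel.start)  # calculated once, reused
        rates[channel.name] = (total / slow_time, total / fast_time)
    return rates


channels = [
    Channel("horizontal_angle", 0.0, 2.0),
    Channel("orbit_radius", 10.0, 4.0),
]
rates = synced_rates(channels, slow_time=4.0, fast_time=2.0)
```

Because both rates for a channel come from the same total distance, values with very different ranges (an angle in radians and a radius in world units, say) still end their transitions at the same moment.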

After this, I added the necessary objects from the previous version of the project to the scene (which meant disregarding the previously-used camera target objects), and made sure their parenting, components and component values were set properly. Then, I wanted to address a bug that had been affecting the isometric camera system at the end of the previous semester, where the camera would start spinning wildly in the opposite direction to that which it was meant to be spinning in and would continuously increase the speed at which it was orbiting. I noticed that it was occurring when the horizontal angle value was moving across the threshold between π and -π, so I first tried changing the calculation for appropriately setting the horizontal angle value when crossing the threshold. When that didn’t work, I realised that I had to invert the subtraction for finding the difference between the current and target horizontal angle values, such that the current value would consistently change in one direction, and once I did this for one direction across the threshold, I had to do it for the other. This fixed the bug, and now my isometric camera system was working as intended.
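For reference, a common alternative way of handling the π/-π threshold is to wrap the difference between the two angles rather than special-casing each crossing direction. The Python sketch below shows that alternative, not the fix described above, and the function names are hypothetical.

```python
import math


def wrap_angle(angle):
    """Wrap an angle in radians into the range [-pi, pi)."""
    return (angle + math.pi) % (2 * math.pi) - math.pi


def shortest_delta(current, target):
    """Signed smallest rotation that takes `current` to `target`."""
    return wrap_angle(target - current)


def step_towards(current, target, max_step):
    """Move `current` towards `target` by at most `max_step` radians,
    taking the short way round even across the pi / -pi threshold."""
    delta = shortest_delta(current, target)
    delta = max(-max_step, min(max_step, delta))
    return wrap_angle(current + delta)


# Crossing the threshold: 3.0 rad to -3.0 rad is a small positive turn
# (about 0.28 rad), not a turn of -6.0 rad the long way round.
small_turn = shortest_delta(3.0, -3.0)
```

With the difference wrapped like this, the sign of the step never flips unexpectedly at the threshold, which is the kind of flip that can make an orbiting camera reverse and accelerate.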

Once that was out of the way, I thought about how I could handle the beat timer for world traversal in the game, as well as how to check whether the player presses the jump button within an acceptable window around each beat/half-beat. Then, Adam came and asked to speak to me in his office. He spoke about a pretty exciting opportunity for an internship with the design and branding company, Moving Brands, and how he felt that I had the skill set that they were looking for. I won’t act like the internship is a certainty, but it does seem like a pretty exciting opportunity for work post-university (and would be a really cool way to be getting money in while I’m working on indie game projects in my free time), so I’ll see how it goes. I really appreciate Adam talking to me about it, too. Soon after this, I headed home, and spent a large portion of the evening reading through most of the pre-reading material for Friday’s tutorial session, concerning brands and branding (though I’d started feeling quite unhappy in the evening, and was thus having to read with things on my mind, which is always a bit tricky).

I was still quite unhappy on Tuesday morning, but I got to university early and finished off the pre-reading for Friday’s session. Before any sessions started, I started working on the beat management system, creating the script and defining some variables (though not getting any further until later that day). When James’ first TechShop session of the semester began, he spoke to us about how the sessions, and the semester in general, would be handled. He told us that he’d expect to speak with each student/team during every session, but we’d otherwise be free to get on with our work, with him available to help where he can. He also said that the year is about showing-off our portfolios, so he wants to make sure we’re able to make the games that we want to make. James told us that he intended to promote a culture of cross-year skill set sharing, if such a thing is necessary, so that years can help each other to achieve their project goals by filling-in any gaps during development. He also said that, should anyone be relatively light on work around Easter, he’d try to arrange some internships for us (though I imagine my workload will be fairly full throughout the entire semester).

Once James had finished introducing the semester, we had a team meeting to discuss aspects of the project that still needed to be determined. First, George and Bernie decided that they needed to start a line of communication between them so that their 3D asset creation could be consistent. Then, George said that we’re going to need a rough schedule for the semester, so that we can keep on-track to actually finish the project, and he said he’d talk to Adam about it. We determined that my work could be relatively flexible, generally working on mechanics, features and getting everything working, as well as making small prototypes for Bernie’s ideas, and that George will check-in to find out what I’m working on. We discussed how, as well as making designs for George and Bernie to make models from, Ella can be working on potential 2D assets and UI aesthetics (with Bernie designing the functionality of the UI). George and Bernie then determined that they needed to be working on a lot of assets in a relatively short amount of time, so that they can work on other elements as well, and reiterated that they need to work on consistently replicating Ella’s and each other’s visual styles. George said that, since we didn’t have an active member of the team dedicated to it, his biggest concern for the project was music, and said that we can come up with ideas for music for George to sample, so that the samples can inform the work of whomever we outsource the music to, though we’d need a good pipeline for this process. Then, we discussed how we’d need branding- and marketing-related stuff, as well as how animations will need to be designed at some point before they’re developed.

After our team discussion, we returned to the computer room to have a discussion as a team with James. I passed him the notes I’d taken during the team discussion, and he told us that we seemed to be all over the place. He said that prototyping mechanics before a solid one is found is wasted development time (which may differ from how Adam sees it), and suggested that I work with Bernie on mechanic design, though that idea kind of fizzled-out. James asked how important a musical understanding would be in our synchronisation interactions, wondering whether the mechanics were going to mimic a technical aspect of music or use music as the context of a more abstract synchronisation. He wondered why we were worrying about having a dedicated musician on the team: if the music acts as a ‘facade,’ he said, we could use stock music (which Bernie disagreed with, and I agree with Bernie), and if the player is expressing themselves through the musical instrument, they become the team’s dedicated musician. James offered a simple example of the player picking an appropriate response to what each character plays, and when Bernie said that there isn’t such a thing as a ‘correct’ response (to which I’d agree), James said that there technically is. He told us that we need to determine the main interaction mechanic soon, and that we need to decide what our game really is, either a creative tool or a ‘facade,’ and if our game is the latter, we have to decide how music can be shaped around the mechanic. He said that a good game is like a Triforce of mechanics, aesthetics and narrative, and that we need to get that mechanics portion. James said that he’s available to help with designing mechanics, and that we can’t have big, empty environments, so we need to make sure the transitional areas contribute to the overall story of our game.
After he told the others to knock-out environments quickly before moving onto characters, he told us that we’re big dreamers, and that that may be a development weakness. Finally, he told us to make the cake before we decorate it.

After speaking to James, we moved to the main studio, and I continued working on the beat management script while the others spoke about concept art and 3D modelling. For the beat management script, I programmed it such that a counter value is constantly increased by an increase rate value (factoring-in delta time), and upon equalling or surpassing the beat length multiplied by the number of beats, has that product subtracted from it (to reset the counter). Then, each time the remainder of the beat counter when divided by the beat length is less than or equal to a success threshold divided by two, or greater than or equal to the beat length minus the success threshold divided by two, a Boolean determines that, if the player were to jump, they’d be doing so on-beat, resulting in a successful jump. Once I’d programmed that script, we went for lunch, and I started feeling terrible.
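The beat counter logic described above can be sketched roughly as follows. This is Python rather than the Unity script itself, and the class and parameter names (`BeatTimer`, `beats_per_cycle`, `success_threshold` and so on) are placeholders chosen for the example.

```python
class BeatTimer:
    """Counter that wraps every `beats_per_cycle` beats and reports
    whether the current moment falls within a window around a beat."""

    def __init__(self, beat_length, beats_per_cycle, success_threshold, rate=1.0):
        self.beat_length = beat_length              # seconds per beat
        self.beats_per_cycle = beats_per_cycle      # beats before the counter resets
        self.success_threshold = success_threshold  # total width of the window
        self.rate = rate                            # counter increase per second
        self.counter = 0.0

    def update(self, delta_time):
        """Advance the counter; call once per frame with the frame's delta time."""
        self.counter += self.rate * delta_time
        cycle_length = self.beat_length * self.beats_per_cycle
        if self.counter >= cycle_length:
            # Subtract the cycle length (rather than zeroing the counter)
            # so that any overshoot carries over into the next cycle.
            self.counter -= cycle_length

    def on_beat(self):
        """True if a jump pressed right now would count as on-beat."""
        phase = self.counter % self.beat_length
        half_window = self.success_threshold / 2
        return phase <= half_window or phase >= self.beat_length - half_window


timer = BeatTimer(beat_length=0.5, beats_per_cycle=4, success_threshold=0.1)
timer.update(0.47)          # 0.03 s before the second beat
can_jump = timer.on_beat()  # inside the window, so this is True
```

Checking both ends of the beat means a press that lands slightly early counts just as much as one that lands slightly late, which is what splitting the threshold in two achieves.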

After having lunch, I headed to the room of the Reflective Journal session upstairs, and managed to feel even worse, feeling awful and incredibly depressed throughout the session itself. The first Reflective Journal session began with Adam speaking about what’s expected of us for the module and its submission, as well as the kind of things we can write about for it. Then, after he explained that the module’s journalistic pieces will be published in the class’ publication for the degree show, Adam handed-out a number of books and show publications for us to look at for ideas for the layout of our publication. After this, Sarah, Venny and Samantha were delegated as the trio to work on the publication, and then we came up with ideas for questions to cover in our pieces for the module, with Adam going around the room to ask people what their ideas were. Right before he asked me, I thought of what I could look into: cultural and language barriers in games, which I decided upon based on how our game is trying to circumvent these barriers by using music as a universal language. I’ll need to come up with a proper question soon, however, as we don’t have long to work on the module itself before submission. With people having shared their ideas, Adam tried to give everyone pointers as to what to look into, telling me to speak to Vanissa (since her PhD apparently concerned culture in games). I still felt terrible after the session, and after being helped to feel better (which I greatly appreciated), I headed home.

Feeling somewhat better, I decided to get some work done in the evening. As I’d only programmed the beat management script earlier in the day, I tried testing whether it worked. However, Unity was refusing to load the current version of the script, only loading a version that lacked subroutine calls during each frame update. To fix this, I switched the Start call to an Awake call, and saved the change. With some value changes in the Inspector window, I saw that the script was working as intended. Once I saw that it was working, I remembered that I was not only factoring beats into jumping, but also half-beats, so I added some code to set a Boolean to true when within a success threshold around a half-beat. Then, I briefly increased the rate of acceleration when moving to make movement slightly snappier, as per George’s request. After this, I headed to bed.

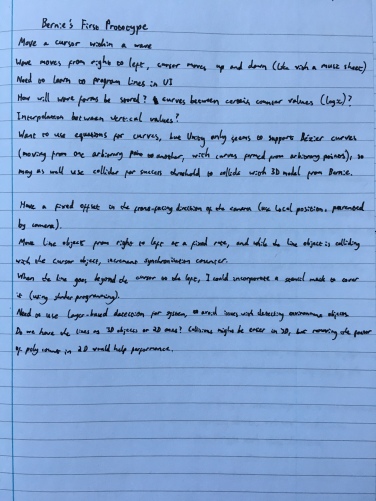

I headed to the studio early on Wednesday morning, and carried-out some CBT-related work. For a while, I was stuck thinking about how to handle jumping and moving on slopes with Unity’s physics, and whether to ditch physics entirely with vertical movement (so that I have more control over it). After some time, Adam came into the studio and spoke to us. He told Bernie to tell me to make some mechanical prototypes, so Bernie said that I could make a prototype for moving a cursor vertically to keep it within the path of a moving curve. Adam promptly made a sketch of the mechanic on a large sheet of paper, and I said that I’d need to learn how to program lines in 2D or on the UI layer in order to get the prototype working. After having lunch, I created a new Unity project for the prototype and thought of different ways in which I could handle it. I was hoping to be able to graphically map curves from equations, either as 2D objects or on the UI layer, so that I could check whether the player was scoring mathematically in code. However, when I looked-up Unity’s curve rendering implementation, I found that smooth curves can only really be achieved with Bézier curves (which are disgusting and not at all what I was after). Alternatively, Unity has a built-in line renderer, though that would mean the curves that are created won’t be particularly smooth (with a higher sample count being needed for a smoother curve). For the time being, we decided that Bernie would make a 3D model of the curve, and I’d simply use collision (with a collider on the player’s cursor and a mesh collider covering the curve) to check whether the player is scoring. I realised that if I worked with 3D curves and wanted the curve to disappear as it passed the player, I’d have to learn about stencil masks, being shaders that obscure areas of objects without obscuring other objects (confining objects within stencils). After researching, I headed to my CBT session.

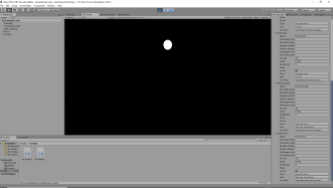

When I returned from CBT, I continued to take notes about the prototype and consider its implementation. After a while, I added the curve models that Bernie made to the project, and gave them different materials to serve their different purposes (one was made to be lit, so that the lit upper half and shadowed lower half would contrast to create a target line in the middle, and the other was made to be unlit and appear flat). I also gave the scene a black skybox, so that the curves would contrast nicely against it. After this, I started to feel quite unhappy and headed home, feeling progressively worse throughout the evening. I didn’t find myself able to get any more work done that day.

On Thursday morning, I headed to the studio early again to continue with Bernie’s mechanical prototype. I briefly changed the rotation of the scene’s directional light source to better light the pointed curve. After this, I created two spherical objects for the player’s cursor, giving them different materials to match those of their respective curve styles, and parented the two spheres and the two curves under a cursor parent object and a wave parent object, respectively. Then, I spent a bit of time positioning the cursors and waves, such that they’d be seen properly and evenly during gameplay. Next, I created a wave management script (though didn’t do much with it quite yet), and created a layer for the cursors and waves to reside within, making it so that they’d only collide with themselves and each other. After doing this, I started to feel genuinely terrible and incredibly depressed.

After I’d spent some time doing basically nothing, Adam came into the studio and spoke to me, mostly about seeking further help with my depression through the university. I wasn’t really in any frame of mind to act upon his suggestions, but I appreciated it nonetheless. In the afternoon, James came into the studio, and we discussed how I was handling the implementation of Bernie’s mechanical prototype. He suggested that it should be a 2D solution, and that rather than relying on collisions, I could have a mathematical equation solution, with lines interpolated between fixed points defined by a counter value being injected into an equation. Essentially, this was the kind of thing that I considered doing earlier, using Unity’s line renderer to accomplish the interpolation, though I’m aware that it won’t result in a smooth curve. Still, it’s probably worth trying. James also said that I could generate a smooth curve by putting whichever mathematical equations I use into a graphical calculator program, though I know that that would also require some trial and error with synchronising the visual representation of the curve with the correct values each frame. Before leaving, he offered to help with maths, coding, or being someone to talk to about life and stuff, and that’s all very much appreciated.
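As a rough illustration of the equation-based approach James described, the Python sketch below samples a curve at fixed x positions, joins the samples with straight lines, and checks the cursor against the interpolated height. The sine equation, the function names and the tolerance value are all assumptions made for the example; nothing here reflects a chosen implementation.

```python
import math


def wave_points(counter, x_offsets, amplitude=1.0, frequency=1.0):
    """Sample y = amplitude * sin(frequency * (counter + x)) at each offset.

    `counter` advances over time, so the same offsets yield a moving curve.
    `x_offsets` is assumed to be sorted and strictly increasing.
    """
    return [(x, amplitude * math.sin(frequency * (counter + x))) for x in x_offsets]


def height_at(points, x):
    """Linearly interpolate the curve's height at `x` between sampled points."""
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if x0 <= x <= x1:
            t = (x - x0) / (x1 - x0)
            return y0 + t * (y1 - y0)
    raise ValueError("x lies outside the sampled range")


def is_scoring(points, cursor_x, cursor_y, tolerance=0.1):
    """True if the cursor is within `tolerance` of the drawn line at its x."""
    return abs(cursor_y - height_at(points, cursor_x)) <= tolerance


# More offsets give a smoother-looking line at the cost of more samples.
offsets = [i * 0.25 for i in range(41)]  # x from 0.0 to 10.0
points = wave_points(counter=0.0, x_offsets=offsets)
```

The same list of points could be handed to a line renderer for drawing, so the picture on screen and the scoring check would come from identical values each frame.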

After speaking with James, I added the subroutine to the wave management script for cycling between visual modes for the cursor and wave objects, enabling and disabling the game objects completely (including their mesh renderers and colliders) when necessary. I also remembered to add the Boolean that allows the cycling button to be pressed more than once. After that, I added Rigidbody components to the two ball objects (since collisions require at least one colliding object to have a Rigidbody component), and froze their positions and rotations within the components when I realised that they were initially being moved by collisions when starting the scene. Then, I spent a bit of time looking at the links that Adam gave to me about Moving Brands on Monday, just so that I could find out more about them. After this, I headed home.

During the evening, I started writing the script for cycling between modes for vertical cursor movement, as well as applying the movement. I had three movement modes in mind, with the first being based on the vertical position of the analogue stick, the second based on the full 360° angle of the analogue stick, and the third based on the 180° angle between the stick’s position and its vertical extremities. Later that evening, we received an email from Sam Green, showing samples of his work and saying that he’d be up for working with us on the project, so I listened to the samples (they sounded good), gave the email to the others on Discord and responded to Sam. After replying to the email, it was late enough that I had to go to bed before continuing with the script, having only come up with the variables I needed.

I headed-in early again on Friday morning, and started by properly programming the mode cycling for the cursor. Afterwards, I remembered that the input axes needed to be set-up for the right analogue stick (and properly customised for the left one), so I sorted that out. After this, the tutorial session began, and Adam started by speaking about the plan for the semester. He showed us a (fairly rough, again) diagram of the module’s layout, and said that he’d be regularly checking-in with everyone to make sure the projects are on-track. The diagram can be seen below.

While Adam was speaking about the semester, Claire from Moving Brands arrived at the studio, and our branding-focused tutorial began. We initially looked at a promotional video for Resistance 3, discussing the elements of the video that conveyed the game’s ideas. After that, we looked at a few videos documenting the processes of Moving Brands’ design and branding projects. We then broke Sarah and Rubi’s game (Enchanted Kin) down into a series of words that we believed reflected its concepts and ideals, and gathered visual and aural research around the words in groups (I worked with Ella) to inform different aspects of the game’s potential branding. Teams are expected, at some point, to consider carrying-out a similar process for their projects. After this, we took a break for lunch.

After having lunch, I programmed the different behaviours for the cursor’s vertical movement, until the tutorial resumed when the lunch break was over. Adam then showed us a few websites for games and developers, just as the kind of thing to aim towards, and gave everyone feedback on their projects. He said that his main thoughts were that Ella needs to lead in terms of creative direction for the project, considering the feelings and experiences we want to create, so that we can galvanise as a team. He said that Bernie needs to determine the game’s mechanics (and possibly work on marketing the game afterwards), and that we need to avoid being too linear with the project, instead being iterative. After Adam spoke to us, I added the code to apply the vertical movement to the cursor’s parent object. After doing this, I made a few tweaks to the code to make it work properly, first doubling what the angle values were being divided by (as the cursor was breaching the top of the screen). When I decided that I wanted the third input method to correlate with the stick’s angle in the opposite direction, I tried inverting the angle aspect of the cursor’s position calculation, but then realised that this was the wrong thing to do, and instead inverted the vector that the stick position was being compared against for the angle. Then, for the second cursor movement method, I flipped the conditions for determining whether the angle should be above or below 180°, so that the angle affected the vertical position in the opposite direction.
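For anyone curious how the three cursor movement modes might map stick input to a height, here is a hedged Python sketch. The normalised output range of -1 to 1, the downward reference vector, the dead zone and the exact divisors are assumptions for illustration rather than the values used in the prototype.

```python
import math


def unsigned_angle(v, ref):
    """Angle in degrees (0 to 180) between two 2D vectors."""
    dot = v[0] * ref[0] + v[1] * ref[1]
    mag = math.hypot(v[0], v[1]) * math.hypot(ref[0], ref[1])
    cosine = max(-1.0, min(1.0, dot / mag))  # guard against rounding error
    return math.degrees(math.acos(cosine))


def cursor_height(stick, mode, reference=(0.0, -1.0), dead_zone=0.1):
    """Map a stick position (x, y) to a cursor height in [-1, 1].

    Mode 1: the stick's vertical position is used directly.
    Mode 2: the full 0 to 360 degree angle around the stick is used.
    Mode 3: the 0 to 180 degree angle away from `reference` is used.
    Modes 2 and 3 return None while the stick sits inside the dead zone,
    meaning "no angle available, keep the previous height".
    """
    x, y = stick
    if mode == 1:
        return y
    if math.hypot(x, y) < dead_zone:
        return None
    angle = unsigned_angle(stick, reference)
    if mode == 2:
        # Which side of the reference the stick is on decides whether the
        # angle falls below or above 180 degrees; flipping this condition
        # reverses the direction in which the height changes.
        if x < 0:
            angle = 360.0 - angle
        return angle / 180.0 - 1.0
    if mode == 3:
        # Swapping the reference from (0, -1) to (0, 1) inverts this mode.
        return angle / 90.0 - 1.0
    raise ValueError("mode must be 1, 2 or 3")


top = cursor_height((0.0, 1.0), mode=3)  # stick pushed fully up
```

Inverting the reference vector, rather than negating the angle afterwards, keeps the angle itself positive and within its expected range, which matches the reasoning given above for the third mode.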

After this, we spoke with Claire about our project, starting by explaining what we could about it to her. She brought up the point that if the player is moving around the world, moving on after each synchronisation, that’s inherently lonely for the player character (and, by extension, the player). Therefore, she asked us whether our character is meant to be experiencing loneliness. Claire said that using subtle storytelling and relying on player interpretation is nice, though she said that she didn’t quite understand who the protagonist is, so the avatar aspect of the protagonist may need to be a bit more obvious. She said that the lack of text is nice, and that we’re making a very thoughtful piece, but the goals before the end should probably be clearer. She proposed that we could suggest player progression by having the characters follow the player after synchronising, or we could do a synaesthesia thing of combining the gathering of characters with visual and aural changes in the environment. Notably, Claire said that a player is still a player, and has to know what to do in a game. To that end, she said that we’ll need something along the lines of a change in visuals to suggest progression, such as colours increasing in intensity over time, and that the visual cues we incorporate need to be explicit and obvious. She explained that games are inherently visual, so if we’re making one that’s all about sound, we can’t be losing people by being too vague. Finally, she also told us to make sure the camera doesn’t feel too prescriptive. During the discussion, Claire had also been taking note of key aspects of our game on a large sheet of paper, and that sheet can be seen below.

After we’d spoken to Claire, Adam asked me to show her some of my work, so I briefly showed her the initial mechanical prototype from the previous semester (where you have to synchronise one white bar with another, as per Adam’s request). He spoke to her about what he’d proposed to me on Monday, and she told me about the people I’d need to speak to in London. It was appreciated, and I look forward to seeing what becomes of it, if something does happen. After I showed some of the others the progress I’d made with Bernie’s mechanical prototype, I programmed the wave to move from right to left and reset its position once it surpassed a certain point. I then created a script to store the player’s synchronisation score, and reset it when the wave’s position is reset. Then, I created a short script for the cursor spheres to increase the score while colliding with the wave (as long as it’s set to be moving). After that, I quickly created a circle sprite in Photoshop and imported it, putting it on the prototype’s UI canvas as a radial fill image. I then programmed the circle to be used as a score indicator, radially filled based on the current score value divided by an arbitrary maximum score value, and made it so that the current score can’t exceed the maximum score (by preventing it from being increased during collision if it’s equal to or greater than the maximum, and setting it to the maximum value if it exceeds it).
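The score handling boils down to a capped counter and a ratio. A minimal Python sketch, using a hypothetical `SyncScore` class and an arbitrary maximum of 10, might look like this:

```python
class SyncScore:
    """Synchronisation score capped at a maximum, with a 0-to-1 fill ratio."""

    def __init__(self, max_score):
        self.max_score = max_score
        self.score = 0.0

    def add(self, amount):
        """Increase the score, e.g. once per frame while cursor and wave collide."""
        if self.score >= self.max_score:
            return  # already full, so ignore further increases
        # Cap the result in case this increase overshoots the maximum.
        self.score = min(self.score + amount, self.max_score)

    def reset(self):
        """Clear the score, e.g. when the wave's position is reset."""
        self.score = 0.0

    @property
    def fill_amount(self):
        """Fraction of the radial indicator to fill (0.0 to 1.0)."""
        return self.score / self.max_score


score = SyncScore(max_score=10.0)
score.add(4.0)
fill = score.fill_amount  # 0.4, i.e. the circle is 40% filled
```

Keeping the cap inside the score object means the indicator can use the ratio directly without ever needing to handle a value above one.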

Now that the prototype was reasonably functional, Bernie and Adam tested it out. Adam pointed-out that, while playing in the third cursor movement mode, his thumb was constantly pressing on the stick (resting on the right side of the stick’s gate), which was causing RSI-related issues. Adam also asked me whether he could try the prototype with a slower lerp on the cursor’s movement, making it less snappy and responsive but less jerky and sensitive, and said that he preferred it after it was slowed-down. Finally, before we called it a day and left, Adam brought out his small OP-1 keyboard and tried to match playing it with the form of the wave that Bernie had made, in order to show that we don’t need to be prototyping with fully-formed music informing the forms of the waves, but we can instead see what sounds the waves inform. I recorded a video of this, and you can see it below (it was quite entertaining to watch).

During the evening, I added three small circles to the UI, gave them Canvas Group components and programmed them to be shown and hidden (by modifying the alpha values of the Canvas Group components) depending on which cursor movement mode is currently selected (showing the number of circles that correlates to the current mode). Then, I made it so that the first cursor movement mode works for both analogue sticks (like with the other modes), as Bernie noticed that it wasn’t working while testing. After sorting that out, the prototype was functional enough (for the time being, at least), and I headed to bed.

Since my parents were going to come to Winchester on Sunday (so that I could speak to them about how my depression’s progressed), I intended to spend a large portion of Saturday working on this blog post. However, I found that I just couldn’t get much done at all, and therefore made very little progress with it during the day. On Sunday, before and after meeting my parents (it was really nice to see them, and the meal and talk were nice, too), I worked on this blog post. I wasn’t able to finish it during the evening, however, and had to postpone that to today, being Monday.

On Monday morning, I headed to the studio early and continued writing this blog post, feeling quite unhappy. This was what consumed most of my day (reflected in how I haven’t finished it yet and it’s approaching midnight at this point), though other things did happen throughout the day. In the afternoon, I wrote-up and sent a reply email to Sam Green, telling him that we’d decided to welcome him onto the team and the project. We liked the samples he’d sent us, and felt like the project could really use someone of his skill set, so I look forward to seeing how the collaboration goes. Bernie also sent me a new wave model (with a visual representation of one-second increments behind it, which was greatly appreciated) to go alongside an audio track of a trumpet, which he also sent me. The idea was that I could work them into the mechanical prototype, though I wouldn’t be able to until this blog post was out of the way (which now means saving that for tomorrow). Also, in the evening, Sam replied to my email positively, though he did have a number of questions. Due to this blog post, I’ll have to save replying to him until tomorrow, but I’ll do my best to give him all of the information he needs.

Over the week ahead, I intend to make the additions to the prototype that Bernie requested, as well as trying to experiment with bespoke vertical movement on top of the physics-based lateral movement to achieve my intentions with movement and jumping abilities being tied to the fixed beat of the background music. I’ll also try to at least slightly refine my focus for Reflective Journal, if not actually actively work on it (since I’ll need to start doing so at some point very soon). I may also try to approach Bernie’s prototype with a more mathematical solution, with lines drawn between points calculated by plugging a counter value and a number of offset values into equations (to determine y coordinates at numerous x values). We’ll see how it all goes, and whether I’m able to achieve everything I want, considering I’m prone to feeling terrible. Anyway, I’ll see you in next week’s post, which hopefully won’t be a day late.